Interactive and Immersive Mixed Reality Experience with Azure Kinect

This interactive, immersive 3D game is played with the movements of the human body: arm and hand movements interact with game elements that are projected onto a screen. The body-tracking capabilities of the Azure Kinect make this playful experience possible, which makes the game a hands-on application of spatial computing.

Project

At DevOps Fusion 2020, PostFinance wanted to position itself as an attractive and innovative employer for technology-savvy professionals, and asked for an interactive gaming experience for its stand. To impress the DevOps engineers, something novel and never seen before was required. afca. took on the challenge and developed a game that uses recent innovations in spatial computing and builds on the spatial sensor technology of the Microsoft Azure Kinect.

The stand concept was realized in cooperation with nuance Veranstaltungstechnik GmbH, specialists in exhibition stands. An eye-catching truss construction was intended to set PostFinance apart from the surrounding exhibitors and their conventional booth designs. During development of the game, special attention was paid to making the installation adaptable to other events with little effort.

Because of Covid-related event cancellations, the game did not premiere until DevOps Fusion 2021. PostFinance has since used the game successfully at various trade fairs and events.

Game concept

The back wall of the booth is a screen onto which the interactive game is projected. Visitors are automatically “captured” as soon as they enter a specified area of the booth that corresponds to the field of view of the Azure Kinect. Their arm and hand movements are fed directly into the game and immediately influence the action on the back wall. In this way, visitors to the stand automatically become active participants in the game.

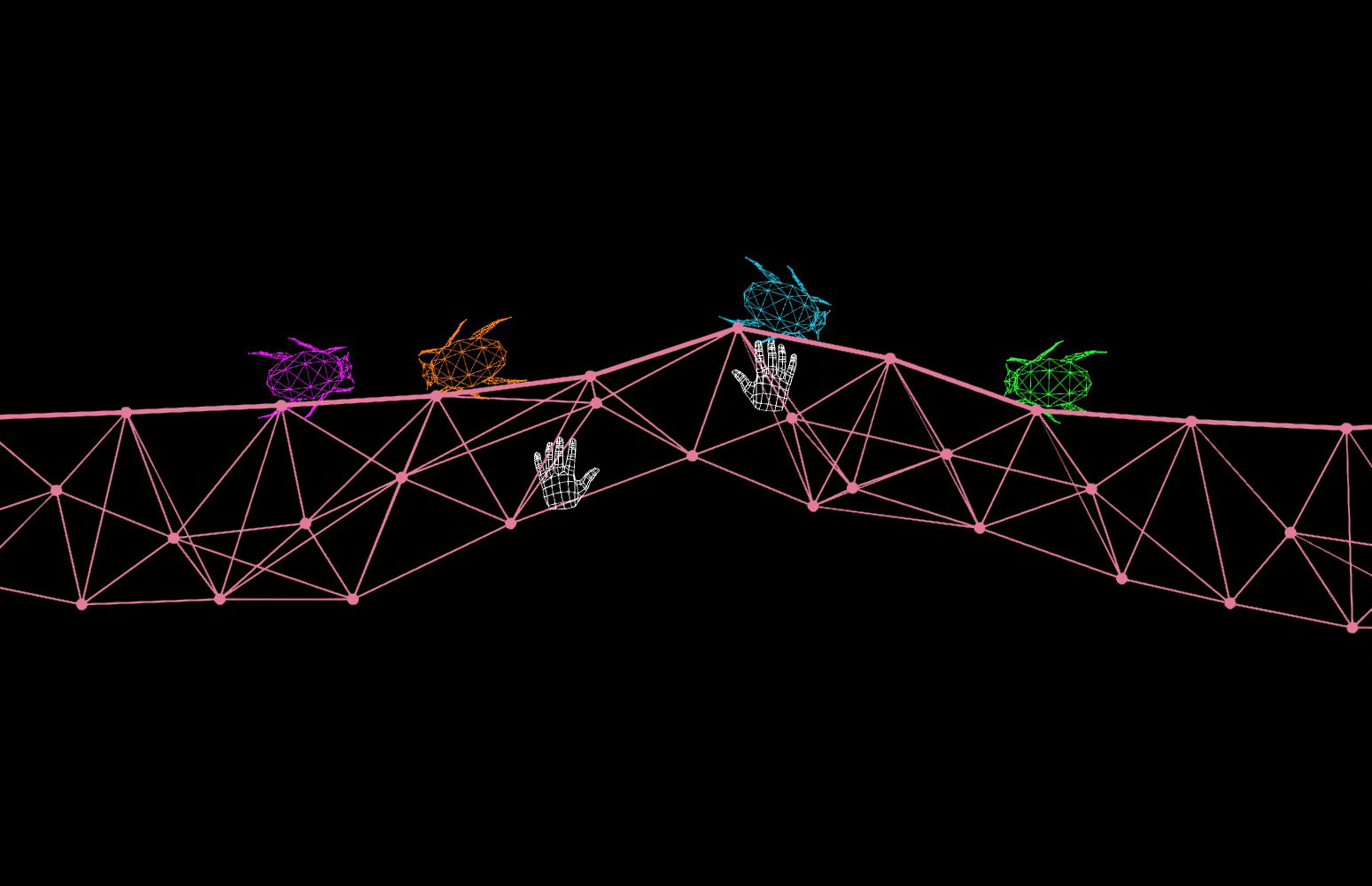

The aim of the game is to move a game character (a beetle) of the indicated color into the PostFinance capture vessel. This is done by interacting with a net string. Each beetle that disappears into the vessel reappears on the net string, so the game never ends and visitors can join in at any time. The graphic elements of the game, the beetle and the net string, are tied to a current IT campaign. A QR code directs visitors to a website where they can take part in a raffle.

Technical implementation

The game is projected by a short-throw projector that can be positioned on the rear frame of the booth. The Azure Kinect camera is mounted so that its interaction field covers a specified area in front of the screen. The camera is a spatial computing developer kit with advanced machine vision capabilities, AI sensors, and a set of programming tools (SDKs). The interactive 3D game was built in the Unity game engine and programmed in C#.
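The idea of a "specified area in front of the screen" can be illustrated with a small filter that keeps only the tracked bodies standing inside a box-shaped zone. This is a minimal sketch, not the project's code: the installation itself was written in C# in Unity, Python is used here only for brevity, and the names `InteractionZone` and `bodies_in_zone` as well as all bounds are hypothetical. It assumes positions in millimetres relative to the camera, with x pointing sideways and z pointing away from the lens.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InteractionZone:
    """Box-shaped floor area in camera space, in millimetres (hypothetical bounds)."""
    x_min: float
    x_max: float
    z_min: float
    z_max: float

    def contains(self, x_mm: float, z_mm: float) -> bool:
        # Height (y) is ignored: only the position on the floor matters here.
        return self.x_min <= x_mm <= self.x_max and self.z_min <= z_mm <= self.z_max


def bodies_in_zone(bodies: dict, zone: InteractionZone) -> dict:
    """Keep the bodies whose reference position (x, y, z) lies inside the zone.

    `bodies` maps a body id to an (x, y, z) tuple, e.g. the pelvis position
    delivered by a body-tracking system (assumed input format).
    """
    return {
        body_id: pos
        for body_id, pos in bodies.items()
        if zone.contains(pos[0], pos[2])
    }


# Example: a zone 3 m wide, starting 1 m and ending 3 m in front of the camera.
zone = InteractionZone(x_min=-1500, x_max=1500, z_min=1000, z_max=3000)
tracked = {1: (0, 0, 2000), 2: (2000, 0, 2000), 3: (0, 0, 500)}
active = bodies_in_zone(tracked, zone)  # only body 1 stands inside the zone
```

Restricting the game to such a zone would keep passers-by outside the marked area from influencing the game, even if the sensor still sees them.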

The sensor recognizes a human body as such within its field of view. The body is modeled as a skeleton of individually movable joints, each of which the Azure Kinect can track (body tracking). The captured spatial motion data (xyz coordinates) are passed on to the game, where they trigger specific interactions with the game elements. The game is easy to understand: players' virtual hands are shown on the screen, where they act as trigger points that deform the virtual net string in order to move the colored beetles.
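How hand positions might act as trigger points can be sketched in two steps: map a hand joint from camera space to normalized screen coordinates, then push nearby points of the string away from the hand. This is an illustrative sketch under stated assumptions, not the project's implementation (which was written in C# in Unity). The functions `to_screen` and `deform_string`, the zone dimensions, the radius and the strength are all hypothetical, and the linear push is a deliberately simple stand-in for whatever deformation the game really uses. The sketch assumes joint positions in millimetres with y pointing down, and a camera that faces the player, so x is mirrored.

```python
import math


def to_screen(x_mm, y_mm, zone_width_mm=3000.0, zone_height_mm=2000.0, mirror=True):
    """Map camera-space x/y to normalized screen coordinates (u, v) in [0, 1].

    Assumptions: the camera faces the player, so x is flipped by default to
    make the screen behave like a mirror; camera y points down, screen v up.
    """
    offset = x_mm / zone_width_mm
    u = 0.5 - offset if mirror else 0.5 + offset
    v = 0.5 - y_mm / zone_height_mm
    # Clamp so that hands outside the zone stick to the screen border.
    return min(max(u, 0.0), 1.0), min(max(v, 0.0), 1.0)


def deform_string(points, hands, radius=0.15, strength=0.1):
    """Push each string point away from every hand closer than `radius`.

    The push falls off linearly from `strength` at the hand to zero at the
    edge of the radius. Points and hands are (u, v) tuples.
    """
    deformed = []
    for px, py in points:
        shift_x = shift_y = 0.0
        for hx, hy in hands:
            dx, dy = px - hx, py - hy
            dist = math.hypot(dx, dy)
            if 0.0 < dist < radius:
                push = strength * (1.0 - dist / radius)
                shift_x += dx / dist * push
                shift_y += dy / dist * push
        deformed.append((px + shift_x, py + shift_y))
    return deformed


# Example: a horizontal string across the screen and one hand just below its middle.
string_points = [(i / 10, 0.5) for i in range(11)]
hand = (0.5, 0.45)
bent = deform_string(string_points, [hand])  # middle of the string bulges upward
```

In a real game loop, the hand positions would be refreshed from the tracked skeleton every frame and the deformed string would in turn move the beetles sitting on it.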